What we solved

Typing a question into a chat box is friction that most AI chat apps never remove. Botinfo closes the loop: the user taps the mic, speaks, and hears the answer back in any of 51 languages, with a choice of male or female voice. Under the hood it is not one product but three separate engineering problems stitched end to end: voice in, the prompt layer, and voice out.

The system at a glance

A mobile app (iOS + Android) captures audio, passes it to native platform ASR, feeds the cleaned transcript through a prompt-engineering middleware, calls the OpenAI GPT-4 Turbo API, and pipes the answer into Google Text-to-Speech for spoken playback. The OpenAI key lives on a FastAPI + Postgres backend proxy, not in the app bundle. Freemium subscriptions gate usage: a 3-day free trial, then weekly, monthly, or yearly plans via StoreKit + Play Billing.

What the user experiences

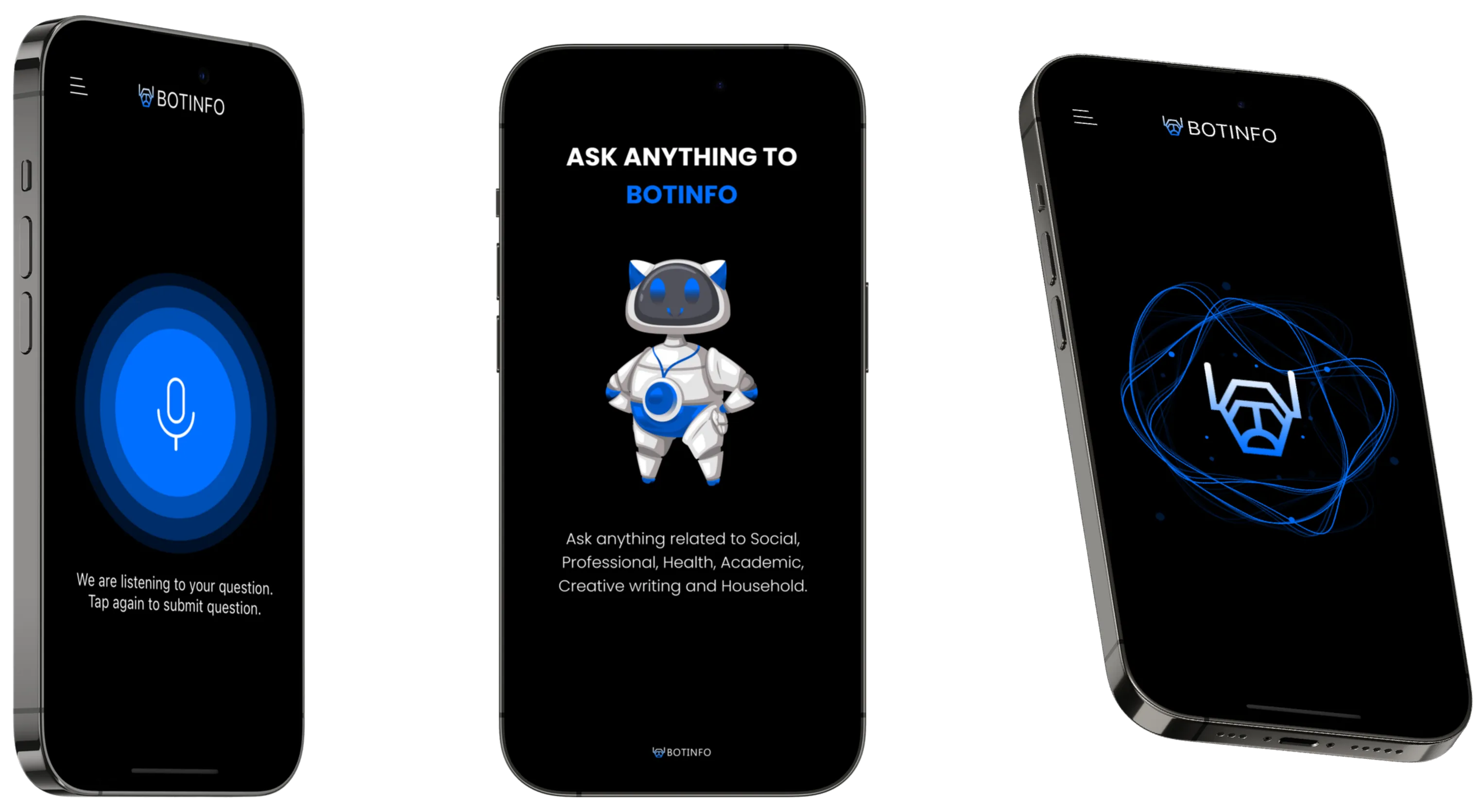

- Open the app. The home screen prompts with example topics: Social, Professional, Health, Academic, Creative writing, Household.

- Tap the mic. The screen confirms: “We are listening to your question. Tap again to submit question.”

- Speak naturally in any of 51 languages. Pick male or female TTS voice.

- The app transcribes in the background, rewrites the prompt to stay in scope, and calls GPT-4 Turbo.

- The reply streams back and plays as natural-sounding speech through the device speaker.

- Ask anything from casual chat to professional tasks - the system adapts through the prompt layer.

How we built the pieces

Voice in - native platform ASR, no extra SDK

Instead of bundling a third-party speech SDK and its bulky per-locale models, we use the device’s native speech-recognition APIs (Apple Speech + Android SpeechRecognizer). Support for 51 languages comes with them. Battery life and install size both benefit.

The middle - prompt engineering, not raw GPT

Botinfo does not pass raw transcripts to GPT-4 Turbo. The prompt-engineering layer classifies intent (question vs task vs creative-writing vs household-how-to) and injects a matching system prompt before the model call. That is the difference between “generic paragraph” and “useful answer for the domain the user actually asked about.”
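A minimal sketch of what such a layer could look like. Everything here is an assumption for illustration: the intent labels, the prompt wording, the English-only keyword rules, and the names `classify_intent` and `build_messages` are hypothetical, and a production classifier would likely be more robust than keyword matching, especially across 51 languages.

```python
import re

# Hypothetical intent labels and prompt wording (not the production prompts).
SYSTEM_PROMPTS = {
    "creative_writing": "You are a creative writing partner. Match the requested tone and form.",
    "household_how_to": "You are a practical household assistant. Answer in short numbered steps.",
    "task": "You are a professional assistant. Produce the requested artifact directly.",
    "question": "You are a concise assistant. Answer in a few spoken-friendly sentences.",
}

# English keyword rules stand in for whatever classifier the real layer uses.
# Rules are checked in order; the first match wins.
KEYWORD_RULES = [
    ("creative_writing", ("poem", "story", "lyrics", "limerick")),
    ("household_how_to", ("clean", "stain", "recipe", "laundry", "unclog")),
    ("task", ("draft", "summarize", "translate", "rewrite", "email")),
]


def classify_intent(transcript: str) -> str:
    """Return an intent label, falling back to 'question' when no rule matches."""
    # Match whole words so that "history" does not trigger the "story" rule.
    words = set(re.findall(r"[a-z']+", transcript.lower()))
    for intent, keywords in KEYWORD_RULES:
        if words.intersection(keywords):
            return intent
    return "question"


def build_messages(transcript: str) -> list[dict]:
    """Prepend the system prompt that matches the detected intent."""
    intent = classify_intent(transcript)
    return [
        {"role": "system", "content": SYSTEM_PROMPTS[intent]},
        {"role": "user", "content": transcript.strip()},
    ]
```

The returned list follows the common system/user chat-message shape, so the same output can be handed to whichever model sits behind the proxy.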

Voice out - Google TTS

The reply text is piped into Google Text-to-Speech with a configurable voice (male or female). It handles the pronunciation of proper nouns, numbers, and the long tail of edge cases that a phone-native TTS can fumble.

The API-key problem - FastAPI proxy, always

The wrong answer is shipping the OpenAI key in the mobile bundle. The right answer is a FastAPI + Postgres proxy: the app authenticates the device to our server, and our server holds the OpenAI key and rate-limits per user. The key never lives in APKs, IPAs, or web bundles, where anyone can decompile it. This is the fence between a weekend project and a product that accepts subscription payments.
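The request path through such a proxy can be sketched without any framework. This is an illustrative outline under stated assumptions, not the production code: `SlidingWindowLimiter`, `handle_chat`, the `sessions` lookup, and the `call_model` callable are hypothetical names, and a real deployment would wrap this logic in a FastAPI route and back the session and counter state with Postgres rather than in-memory dictionaries.

```python
import time
from collections import defaultdict, deque
from typing import Callable


class SlidingWindowLimiter:
    """Allow at most `limit` requests per user within `window_seconds`."""

    def __init__(self, limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._hits = defaultdict(deque)

    def allow(self, user_id: str) -> bool:
        now = self.clock()
        hits = self._hits[user_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False
        hits.append(now)
        return True


def handle_chat(device_token, messages, sessions, limiter, call_model):
    """Return (status_code, body). `sessions` maps device tokens to user ids."""
    user_id = sessions.get(device_token)
    if user_id is None:
        return 401, {"error": "unknown device"}
    if not limiter.allow(user_id):
        return 429, {"error": "rate limit exceeded"}
    # `call_model` runs server-side and is the only code that holds the provider key.
    return 200, {"reply": call_model(messages)}
```

The app only ever sends a device token and messages. The provider key stays inside `call_model`, so it can be rotated or pointed at a different provider without touching the client.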

Subscriptions - tiered freemium

Three-day free trial. Paid plans at $5–7/week, $20/month, or $179.99/year via StoreKit + Play Billing. Webhooks tell the backend in real time whether a user is paid, so rate limits flip without the app restarting.
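One way to picture the webhook-to-quota path is a small entitlement store that billing events update and the request path reads. This is a sketch under assumptions: the quota numbers, the normalized event names, and `EntitlementStore` are all hypothetical. Real App Store and Google Play notifications arrive in their own signed payload formats and would need to be verified and mapped to events like these first.

```python
from dataclasses import dataclass, field

# Hypothetical daily quotas; the real limits are not stated in this write-up.
DAILY_QUOTA = {"free": 5, "trial": 20, "paid": 200}

# Hypothetical normalized event names, produced after verifying a store notification.
ACTIVATING = {"trial_started": "trial", "subscribed": "paid", "renewed": "paid"}
DEACTIVATING = {"expired", "refunded", "revoked"}


@dataclass
class EntitlementStore:
    """In-memory stand-in for a table of user tiers."""
    tiers: dict = field(default_factory=dict)

    def apply_event(self, user_id: str, event_type: str) -> str:
        """Update the user's tier from a billing event and return the new tier."""
        if event_type in ACTIVATING:
            self.tiers[user_id] = ACTIVATING[event_type]
        elif event_type in DEACTIVATING:
            self.tiers[user_id] = "free"
        # Unknown event types leave the tier unchanged.
        return self.tier_of(user_id)

    def tier_of(self, user_id: str) -> str:
        return self.tiers.get(user_id, "free")

    def daily_quota(self, user_id: str) -> int:
        return DAILY_QUOTA[self.tier_of(user_id)]
```

Because the quota is looked up on the server for every request, a tier change takes effect on the user's next call with no app restart.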

Why Botinfo survives when OpenAI changes the rules

Three things keep Botinfo from dying the day OpenAI ships voice mode or changes pricing:

- The API key is server-held - we can swap providers without shipping an app update.

- The prompt layer is ours - we can rewrite it for GPT-4o, Claude, or a self-hosted model without retraining users.

- Voice in and voice out are separate - if Google TTS gets expensive, we swap for on-device TTS without touching the rest.
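The three points above come down to keeping each stage behind a narrow interface. A minimal sketch, assuming hypothetical names throughout (`VoicePipeline` and the stub functions are illustrative stand-ins, not real provider clients):

```python
from dataclasses import dataclass, replace
from typing import Callable


@dataclass(frozen=True)
class VoicePipeline:
    """Each stage is a plain callable, so any one can be swapped independently."""
    build_messages: Callable[[str], list]     # prompt layer: transcript -> messages
    complete: Callable[[list], str]           # model provider: messages -> reply text
    synthesize: Callable[[str, str], bytes]   # TTS provider: (text, voice) -> audio

    def answer(self, transcript: str, voice: str = "female") -> bytes:
        messages = self.build_messages(transcript)
        reply = self.complete(messages)
        return self.synthesize(reply, voice)


# Stub stages standing in for real providers.
def simple_prompt(transcript: str) -> list:
    return [{"role": "user", "content": transcript}]


def fake_llm(messages: list) -> str:
    return "echo: " + messages[-1]["content"]


def cloud_tts(text: str, voice: str) -> bytes:
    return f"cloud|{voice}|{text}".encode()


def device_tts(text: str, voice: str) -> bytes:
    return f"device|{voice}|{text}".encode()


pipeline = VoicePipeline(simple_prompt, fake_llm, cloud_tts)
# Swapping the TTS provider leaves the prompt layer and the model untouched.
swapped = replace(pipeline, synthesize=device_tts)
```

Changing a provider is a one-field change in this design, which is the property the list above relies on.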

Results

- Shipped to the App Store (v1.16.0, Jan 2024) and Google Play.

- 51-language support via native platform ASR.

- Male/female TTS voice options.

- Subscription tiers (weekly / monthly / yearly) live via StoreKit + Play Billing.

- No OpenAI key in the app bundle; every model call authenticated at the FastAPI proxy.

What an engineering team should take from this

If you are building any voice-first LLM product, three things are worth copying from Botinfo:

- Treat the API key as a server secret. If you remember nothing else, remember this.

- Prompt middleware is a first-class component. Version it, log it, swap models behind it.

- Use the platform’s native ASR before you reach for a third-party SDK. 51 languages come free; bundle size stays small.

Tech stack

- Mobile: Flutter (iOS + Android, single codebase - inferred)

- AI: OpenAI GPT-4 Turbo API

- Voice in: Native platform ASR (Apple Speech / Android SpeechRecognizer)

- Voice out: Google Text-to-Speech (male / female voices)

- Middleware: prompt-engineering layer routing intent to matching system prompts

- Backend: FastAPI + Postgres (proxy holding the OpenAI key, session history, rate limiting)

- Subscriptions: StoreKit + Google Play Billing

Screens